Introduction

Deep learning is a subset of machine learning, which is a way for computers to learn from experience without being explicitly programmed.

To understand what deep learning is and how it works, we will discuss some examples, starting with computer vision.

Computer Vision

ImageNet

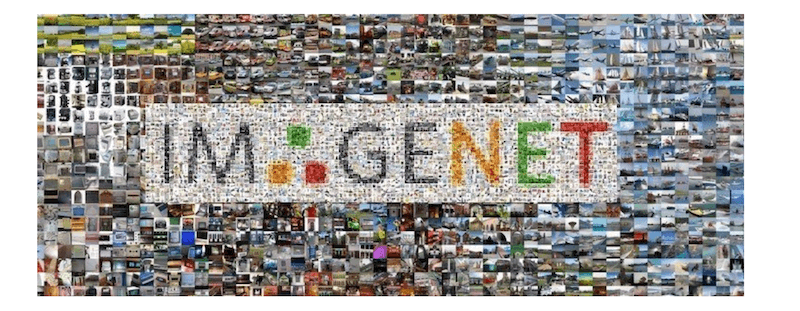

We start our journey with ImageNet, a dataset of about 1.28 million labelled images spread over 1,000 different classes, each with roughly 1,000 example images. Some examples of classes:

- speedboat

- ballpoint

- stove

- trench coat

- trombone

- sea lion

- Border terrier

- …

ILSVRC

Between 2010 and 2017, the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) was a yearly competition that brought together researchers from around the world to “compete” in predicting the class shown in an image.

The goal was to compare and evaluate advances in computer vision. Every participant was given the 1.28 million training images and 50,000 validation images (used to validate your own solution). About 100,000 additional test images were kept secret for the final evaluation.

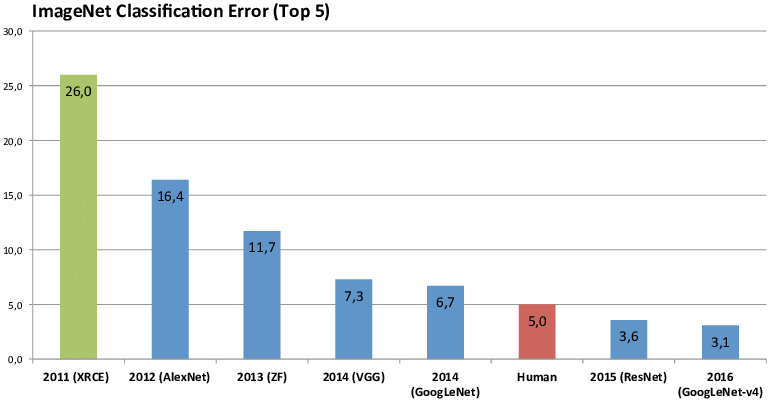

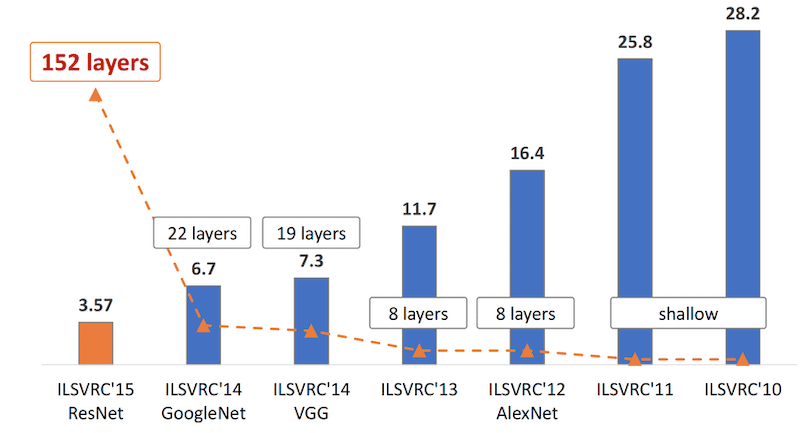

The chart shows the winning results from 2011 to 2016 and their respective error rates. Note that these are top-5 error rates: a prediction counts as correct when the correct class is among the algorithm’s five highest-ranked suggestions.
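To make the metric concrete, here is a minimal Python sketch of how a top-k error rate can be computed. The function name `top_k_error` and the scores are hypothetical, made up for illustration; each row holds one score per class for a single image.

```python
def top_k_error(predictions, labels, k=5):
    """Fraction of images whose true class is NOT among the k highest-scoring classes.

    predictions: one list of class scores per image
    labels: the index of the true class for each image
    """
    misses = 0
    for scores, label in zip(predictions, labels):
        # Class indices sorted from highest to lowest score, keeping the first k
        top_k = sorted(range(len(scores)), key=lambda c: scores[c], reverse=True)[:k]
        if label not in top_k:
            misses += 1
    return misses / len(labels)


# Hypothetical scores for 3 images over 6 classes
scores = [
    [0.05, 0.12, 0.40, 0.20, 0.15, 0.08],  # true class 1 ranks 4th: top-5 hit, top-1 miss
    [0.50, 0.20, 0.11, 0.09, 0.06, 0.04],  # true class 5 ranks 6th: miss for both
    [0.10, 0.70, 0.08, 0.06, 0.04, 0.02],  # true class 1 ranks 1st: hit for both
]
labels = [1, 5, 1]

print(round(top_k_error(scores, labels, k=5), 3))  # 0.333
print(round(top_k_error(scores, labels, k=1), 3))  # 0.667
```

Setting `k=1` gives the stricter top-1 error, where only the single best guess counts.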

Let’s take a closer look at that chart.

In 2011, the winner still got the class wrong for about one in four images (~26% top-5 error). The authors used a ‘traditional’ computer vision approach, handcrafting a lot of functionality to detect specific traits (for example, programmers writing their own code to detect edges).
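As an illustration of this kind of handcrafted functionality, the sketch below slides a hand-written 3×3 Sobel kernel, a classic edge-detection filter, over a tiny grayscale image. It is an assumed example of the general idea, not the 2011 winner’s actual pipeline; the function name and pixel values are hypothetical.

```python
# A hand-written detector for vertical edges: the classic 3x3 Sobel kernel
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def detect_vertical_edges(image, kernel=SOBEL_X):
    """Slide the kernel over every interior pixel and return the absolute response."""
    height, width = len(image), len(image[0])
    response = []
    for y in range(1, height - 1):
        row = []
        for x in range(1, width - 1):
            total = 0
            for dy in range(-1, 2):
                for dx in range(-1, 2):
                    total += kernel[dy + 1][dx + 1] * image[y + dy][x + dx]
            row.append(abs(total))
        response.append(row)
    return response


# A hypothetical 5x6 image: dark on the left, bright on the right
image = [[0, 0, 0, 9, 9, 9] for _ in range(5)]
edges = detect_vertical_edges(image)
for row in edges:
    print(row)  # [0, 36, 36, 0]: strong response only where dark meets bright
```

Every such rule (which kernel, which threshold, which trait to look for) had to be designed and tuned by hand.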

In 2012, an entry named “AlexNet” beat the competition hands down, cutting the top-5 error by about 10 percentage points compared to the previous year. What is interesting about this approach is that it does not contain any specific logic related to the classes it was trying to predict: no handcrafted features. This is where deep learning made its big entrance onto the scene.

In the years that followed, every winner used deep learning, and the state of the art kept improving significantly.

Human-level performance

In 2015, deep learning models even started to surpass ‘human-level’ performance on the ImageNet dataset. The picture below gives an idea of how to interpret that.

Can you easily tell a pug from a cupcake?

In the picture below, which is in reverse chronological order, the declining error-rate bars are overlaid with the increasing number of layers that make up the deep learning models.

To understand this increase in layers, think of stacking more and more basic building blocks on top of each other. That depth is also where the name “deep” learning comes from.
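A minimal sketch of what “stacking layers” means, assuming simple fully connected layers with a ReLU activation (image models such as those in the chart mostly use convolutional layers instead). The weights are random and untrained, so the outputs carry no meaning; the point is only that each layer’s output feeds the next, and depth is the number of layers in that chain. The helper names and layer sizes are hypothetical.

```python
import random


def dense_layer(n_inputs, n_outputs, rng):
    """One fully connected layer: a weight per (output, input) pair and a bias per output."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_inputs)] for _ in range(n_outputs)]
    biases = [0.0] * n_outputs
    return weights, biases


def forward(layer, inputs):
    """Weighted sum of the inputs for each output, followed by a ReLU (for simplicity, on every layer)."""
    weights, biases = layer
    return [max(0.0, sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def build_network(layer_sizes, seed=0):
    """Create one layer between each pair of consecutive sizes."""
    rng = random.Random(seed)
    return [dense_layer(n_in, n_out, rng) for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]


def run(network, inputs):
    # Each layer's output becomes the next layer's input: this chain is the "depth"
    for layer in network:
        inputs = forward(layer, inputs)
    return inputs


shallow = build_network([4, 3])
deep = build_network([4, 8, 8, 8, 3])
print(len(shallow), len(deep))  # 1 4
print(len(run(deep, [0.5, -0.2, 0.1, 0.9])))  # 3
```

Making the network deeper is just a matter of adding more entries to the list of layer sizes; training all those weights is what the learning process takes care of.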

So what is so different about deep learning?

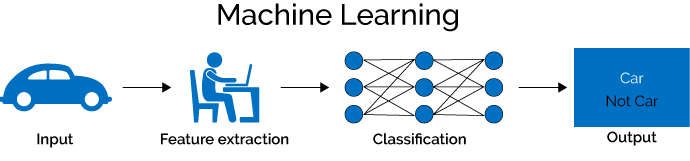

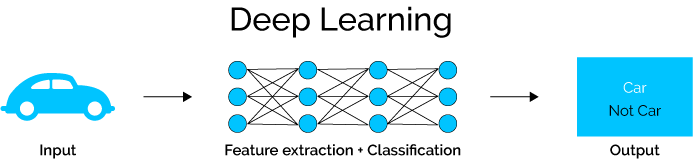

In traditional machine learning, experts spend their knowledge and time crafting a set of features (characteristics) that can be extracted from the input and used to train a classifier that produces an output. Creating and maintaining those features is time-intensive and error-prone.
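The traditional pipeline can be sketched in a few lines of Python. Here, an “expert” has decided that average brightness and left-right contrast are the useful features, and a simple nearest-centroid classifier is trained on them. The features, class names and tiny 2×3 images are all hypothetical, chosen only to show the split between hand-designed feature extraction and a learned classifier.

```python
def extract_features(image):
    """Hand-designed features: average brightness and average left-right contrast."""
    pixels = [p for row in image for p in row]
    brightness = sum(pixels) / len(pixels)
    diffs = [abs(row[x + 1] - row[x]) for row in image for x in range(len(row) - 1)]
    contrast = sum(diffs) / len(diffs)
    return (brightness, contrast)


def train_nearest_centroid(examples):
    """examples: list of (feature_vector, label). Returns the mean feature vector per label."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, f in enumerate(features):
            acc[i] += f
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict(centroids, features):
    """Pick the label whose centroid is closest (squared Euclidean distance)."""
    return min(centroids,
               key=lambda label: sum((c - f) ** 2 for c, f in zip(centroids[label], features)))


# Hypothetical 2x3 grayscale images: "striped" images alternate, "flat" ones are uniform
training = [
    ([[0, 9, 0], [9, 0, 9]], "striped"),
    ([[1, 8, 1], [8, 1, 8]], "striped"),
    ([[5, 5, 5], [5, 5, 5]], "flat"),
    ([[4, 4, 4], [4, 4, 4]], "flat"),
]
centroids = train_nearest_centroid([(extract_features(img), label) for img, label in training])
print(predict(centroids, extract_features([[0, 7, 0], [7, 0, 7]])))  # striped
print(predict(centroids, extract_features([[6, 6, 6], [6, 6, 6]])))  # flat
```

If the chosen features turn out not to capture what separates the classes, a human has to design better ones, which is exactly the time-consuming part.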

Compare that to how deep learning works: given lots of data samples, deep learning takes care of both feature extraction and classification. We ‘simply’ pass in the inputs and the expected outputs, and the network learns both the features and the classifier.
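A minimal sketch of learning directly from raw inputs and labels, assuming a logistic regression model trained with gradient descent on hypothetical 4-pixel “images” (label 1 when the left half is brighter). No features are designed by hand: the model learns one weight per raw pixel. Note that this is a single layer, so it only learns a classifier; a deep network stacks many such layers and trains them all with the same basic idea (gradient descent on a loss, computed via backpropagation), which is how it also learns the intermediate features.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(inputs, targets, learning_rate=0.5, epochs=500):
    """Learn one weight per raw input value, plus a bias, with full-batch gradient descent."""
    n = len(inputs[0])
    weights, bias = [0.0] * n, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n, 0.0
        for x, y in zip(inputs, targets):
            # Gradient of the cross-entropy loss w.r.t. the raw score is (prediction - target)
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j in range(n):
                grad_w[j] += error * x[j]
            grad_b += error
        # Take a step against the average gradient
        weights = [w - learning_rate * g / len(inputs) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(inputs)
    return weights, bias


def predict(weights, bias, x):
    return 1 if sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5 else 0


# Hypothetical raw "images" of 4 pixels; label 1 means the left half is brighter
pixels = [[0.9, 0.8, 0.1, 0.2], [0.7, 0.9, 0.0, 0.1], [0.1, 0.2, 0.9, 0.8], [0.0, 0.3, 0.8, 0.9]]
labels = [1, 1, 0, 0]

weights, bias = train_logistic_regression(pixels, labels)
print(predict(weights, bias, [0.8, 0.7, 0.2, 0.1]))  # 1
print(predict(weights, bias, [0.2, 0.1, 0.7, 0.9]))  # 0
```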

Computer Vision Tasks

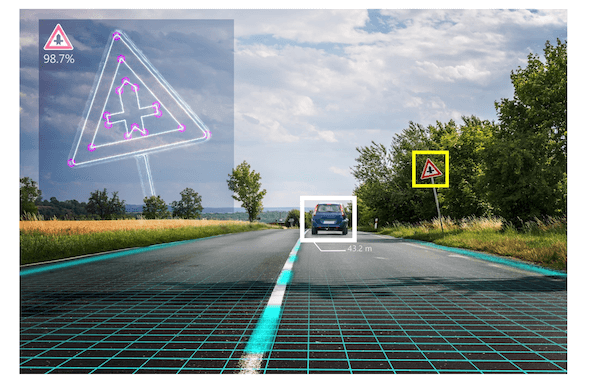

In computer vision, we distinguish several different tasks.

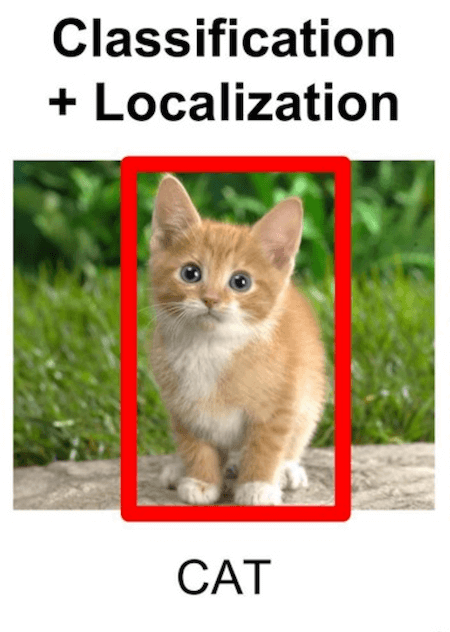

Classification

Classification tells you which class the content of the image belongs to. In the example below, if the system has learned to recognize cats, it should label the image as containing a cat.

If an image can contain several classes at once and you want all of them as labels, you can use multi-label image classification.
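The difference between the two often comes down to the output layer. A common approach, sketched here as an assumption rather than a fixed rule, is a softmax over all classes for single-label classification (the classes compete and the highest probability wins) and an independent sigmoid per class for multi-label classification (each class gets its own yes/no decision, here at a 0.5 threshold). The class names and raw scores are hypothetical; in practice a multi-label model would be trained with sigmoid outputs rather than reusing single-label scores.

```python
import math


def softmax(logits):
    # Subtract the maximum for numerical stability; the results sum to 1
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


CLASSES = ["cat", "dog", "sofa"]  # hypothetical label set
logits = [2.0, 1.5, -1.0]         # hypothetical raw model scores for one image

# Single-label: probabilities compete, pick the one most likely class
probs = softmax(logits)
single_label = CLASSES[probs.index(max(probs))]

# Multi-label: every class gets an independent probability and threshold
multi_label = [c for c, z in zip(CLASSES, logits) if sigmoid(z) >= 0.5]

print(single_label)  # cat
print(multi_label)   # ['cat', 'dog']
```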

Localization

Classification with localization tells you which class is in the image and where it is, by predicting a bounding box around it.
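A common way to judge a predicted bounding box is intersection over union (IoU): the area where the predicted and the true box overlap, divided by the total area they cover together. A frequently used convention counts a localization as correct when the IoU is at least 0.5. The sketch assumes boxes given as `(x_min, y_min, x_max, y_max)` in pixels; the coordinates are made up.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    # Width or height becomes 0 when the boxes do not overlap
    intersection = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


predicted = (50, 40, 150, 140)     # hypothetical predicted box around the cat
ground_truth = (60, 50, 160, 150)  # hypothetical labelled box
print(round(iou(predicted, ground_truth), 3))  # 0.681
```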

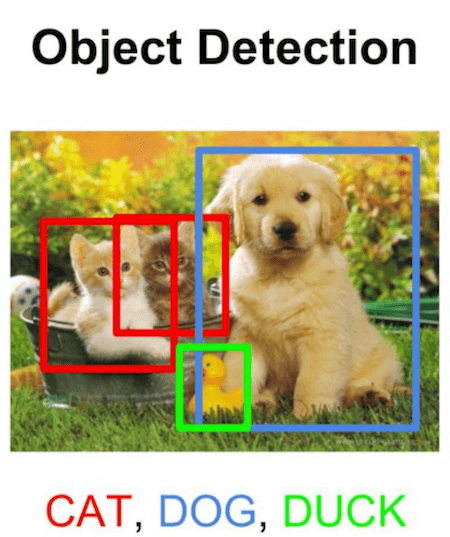

Object Detection

Object detection finds every object of the classes it knows in the image and predicts a class and a bounding box for each of them.
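Detectors typically propose many overlapping candidate boxes for the same object. A widely used post-processing step in many detectors is non-maximum suppression (NMS): keep the highest-scoring box and drop other boxes of the same class that overlap it too much. The detections and the 0.5 threshold below are hypothetical, and the IoU helper is repeated so the snippet runs on its own.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    w = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    h = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = w * h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, iou_threshold=0.5):
    """detections: list of (label, score, box). Per class, drop boxes that overlap a better one."""
    kept = []
    for det in sorted(detections, key=lambda d: d[1], reverse=True):
        label, _, box = det
        if all(k[0] != label or iou(k[2], box) < iou_threshold for k in kept):
            kept.append(det)
    return kept


detections = [
    ("cat", 0.92, (50, 40, 150, 140)),
    ("cat", 0.85, (55, 45, 155, 145)),    # near-duplicate of the first cat box
    ("dog", 0.80, (200, 60, 300, 180)),
    ("cat", 0.40, (400, 300, 450, 350)),  # a second, separate cat
]
for label, score, box in non_max_suppression(detections):
    print(label, score, box)
# cat 0.92 (50, 40, 150, 140)
# dog 0.8 (200, 60, 300, 180)
# cat 0.4 (400, 300, 450, 350)
```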

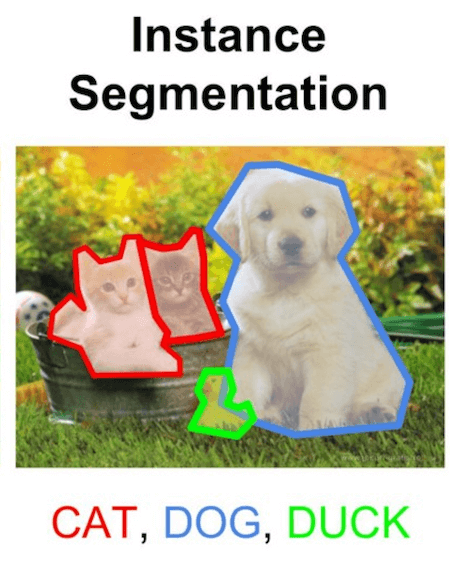

Segmentation

Segmentation predicts the classes in the picture as well as the contours of those classes. In practice, this comes down to predicting a class for every pixel of the image.
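Predicting a class for every pixel can be sketched as picking, for each pixel, the class with the highest score. The per-pixel scores are hypothetical stand-ins for a segmentation model’s output on a tiny 3×4 image, with class 0 as background and class 1 as cat.

```python
# Hypothetical model output: scores[y][x] holds one score per class for that pixel
scores = [
    [[0.9, 0.1], [0.8, 0.2], [0.3, 0.7], [0.9, 0.1]],
    [[0.7, 0.3], [0.2, 0.8], [0.1, 0.9], [0.6, 0.4]],
    [[0.9, 0.1], [0.4, 0.6], [0.2, 0.8], [0.8, 0.2]],
]


def segment(pixel_scores):
    """Assign every pixel the index of its highest-scoring class."""
    return [[max(range(len(p)), key=lambda c: p[c]) for p in row] for row in pixel_scores]


mask = segment(scores)
for row in mask:
    print(row)
# [0, 0, 1, 0]
# [0, 1, 1, 0]
# [0, 1, 1, 0]
```

The 1s in the resulting mask trace the outline of the cat.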

Natural Language Processing

A second application we will look at is natural language processing (NLP), which focuses on how computers process and analyze large amounts of natural language text. Understanding language comes quite easily to humans but is very hard for computers.

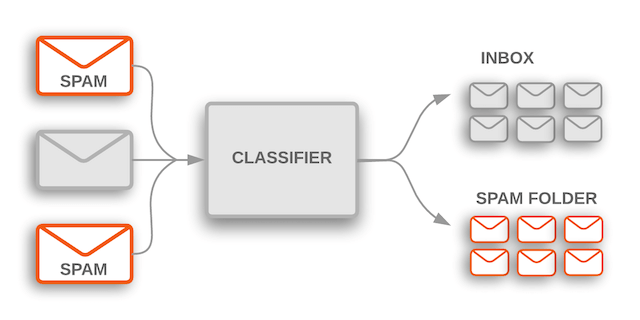

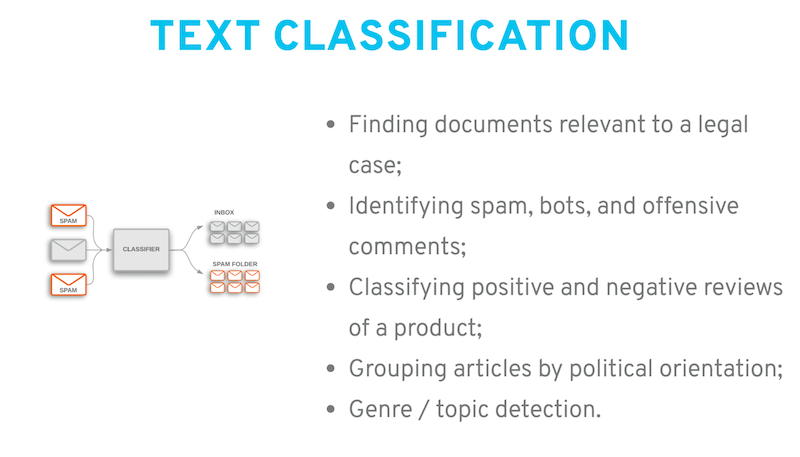

Probably one of the best-known examples is spam classification. Given an incoming email, should it be marked as spam or be allowed into the user’s inbox?

Instead of manually writing rules to check whether an email is spam, we can use deep learning to build a classifier that learns from labelled examples of which emails are spam and which are not.
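To show the idea of learning from labelled examples instead of writing rules, here is a sketch of a multinomial naive Bayes spam filter in plain Python. Naive Bayes is a classic statistical method swapped in for simplicity, not a deep learning model; a deep learning classifier would learn from the same kind of labelled data. The training emails are made up.

```python
import math
from collections import Counter


def tokenize(text):
    return text.lower().split()


def train_naive_bayes(emails):
    """emails: list of (text, label). Count how often each word appears per label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in emails:
        word_counts[label].update(tokenize(text))
        label_counts[label] += 1
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, label_counts, vocabulary


def classify(model, text):
    word_counts, label_counts, vocabulary = model
    total_emails = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in ("spam", "ham"):
        # log P(label) + sum of log P(word | label), with add-one (Laplace) smoothing
        score = math.log(label_counts[label] / total_emails)
        total_words = sum(word_counts[label].values())
        for word in tokenize(text):
            score += math.log((word_counts[label][word] + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


training = [
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch on monday with the team", "ham"),
]
model = train_naive_bayes(training)
print(classify(model, "free prize inside"))       # spam
print(classify(model, "team meeting on monday"))  # ham
```

Nobody wrote a rule saying “free” is suspicious; the model picked that up from the labelled examples.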

But NLP can solve a much broader set of tasks. It can also be used to summarize documents, extract structured data from text, or translate text automatically. When companies first started offering machine translation, the results were a bit disappointing, but over time the algorithms improved a lot, although they are still not perfect. :)

Structured data

In most literature, computer vision and language processing get the most attention. Structured data, on the other hand, gets a lot less attention, yet for companies, applying these techniques to it can prove very valuable.

Many companies have lots of data in their ERP systems, CRM systems, SQL databases and the like, which could be used to train models that predict when production machines will fail, how much they will be able to produce, when customers might churn, or what sales to expect on a given day.
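Before such records can be fed to a model, they usually have to be turned into numeric vectors. A minimal sketch, assuming hypothetical CRM fields (`plan`, `monthly_spend`, `support_tickets`), one-hot encodes the categorical field and min-max scales the numeric ones to the range [0, 1]. These are common preprocessing choices, not the only ones.

```python
# Hypothetical customer records, as they might be exported from a CRM system
customers = [
    {"plan": "basic", "monthly_spend": 20.0, "support_tickets": 1},
    {"plan": "premium", "monthly_spend": 95.0, "support_tickets": 4},
    {"plan": "pro", "monthly_spend": 50.0, "support_tickets": 0},
]


def build_encoder(records, categorical, numeric):
    """Learn the category lists and min/max ranges from the records, return an encode function."""
    categories = {f: sorted({r[f] for r in records}) for f in categorical}
    ranges = {f: (min(r[f] for r in records), max(r[f] for r in records)) for f in numeric}

    def encode(record):
        vector = []
        for f in categorical:
            # One-hot: one 0/1 entry per known category
            vector.extend(1.0 if record[f] == c else 0.0 for c in categories[f])
        for f in numeric:
            lo, hi = ranges[f]
            # Min-max scaling so no feature dominates just because of its unit
            vector.append((record[f] - lo) / (hi - lo) if hi > lo else 0.0)
        return vector

    return encode


encode = build_encoder(customers, categorical=["plan"], numeric=["monthly_spend", "support_tickets"])
print(encode(customers[1]))  # [0.0, 1.0, 0.0, 1.0, 1.0]
```

In a real project, the categories and ranges would be learned from the training data only and then reused for new records.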

In essence

Deep learning is:

- a subset of Machine Learning,

- which learns a hierarchy of concepts by itself,

- using a long string of mathematical operations.

(Reminder: the not so scary basics)

Where to next

This post is part of our “Artificial Intelligence - A Practical Primer” series.
